The first Goal-Native Anticipatory AI

How I redesigned interaction paradigms to shrink multi-agent AI creation from a months-long struggle into a five-minute task.

Role

UX/UI Designer -> Sole lead UX/UI designer

Team

2 UX/UI designers, Product Manager

Date

2023 - 2025

Status

Shipped V3 MVP (as of Jan 2026)

Overview

As AI became a core competitive advantage, small-to-medium enterprises (SMEs) faced significant barriers to adoption.

Dragonscale's mission was to provide a full-stack framework that makes multi-agent AI accessible, deployable, and scalable even for non-technical SME users.

Product evolution

Dragonscale underwent a rapid 3-phase evolution to meet shifting market needs. This case study follows those phases:

Phase 1: Full-stack framework for multi-agent AI adoption.

Phase 2: AI App Store.

Phase 3: Goal-Native Anticipatory AI platform.

A masterclass in building fluid, market-responsive software.

PHASE 1: Full-stack framework for multi-agent AI adoption.

Building the MVP

UX problem

How might we design a platform that empowers non-technical SME users to architect and automate critical workflows using multi-agent AI—without writing a single line of code?

1.1

Design Approach

We began by investigating which design approach could make complex configuration feel intuitive to everyone.

RESEARCH

Goal

Understand user pain points while interacting with existing systems.

Key insights

Multi‑agent orchestration is a systems‑level, intent‑driven, multidimensional problem.

Traditional point-and-click interfaces are step‑driven, parameter‑centric, and fragmented. They force people to think in UI terms instead of intent and outcomes.

For non-technical users, the constant mental translation between those two worlds is the bottleneck.

(This insight laid the foundation for the "goal-native" breakthrough later on.)

Design decision

Enabling natural language configuration - assembling multi-agent AI through words.

IDEATION

Creation of Rustic UI

Given the research results and the findings from market analysis, I co-developed a scalable system that laid the groundwork for our future conversational UX: a design framework enabling rich multimodal conversational experiences for multi-agent AI.

It includes an internal design system and an open-source component library for Figma and React.js.

Rustic UI framework

The future is conversational. Anticipating where UX is heading, we designed Rustic UI to be multimodal: it handles flexible inputs (text, voice, gesture, uploads) and rich outputs (interactive charts, media, live components).

Impact

- Reduced cognitive load

- Expanded user base by upgrading accessibility

- Cut developer handoff time by ~40%

- Achieved pixel-perfect visual consistency across all surfaces

1.2

Defining the scalable B2B architecture

We started exploring how we could design a single B2B UX framework that is:

scalable,

adaptable to every business’s unique AI solutions,

and capable of serving the diverse needs of multiple organizational layers.

RESEARCH

Goal

Map the typical multi-level organizational structure in target SMEs and identify persona-driven feature needs across layers.

Design decision

Establish a robust information architecture (IA).

As our next step, we created persona profiles and traced each user journey to major interactions and challenges.

Example of a user journey for the engineer persona

IDEATION

Layered Information Architecture

We had to design an enterprise-targeted interface that serves users with drastically different needs - from the CEO to the IT Admin. Not an easy task, but we accepted the challenge.

As a next step, we architected a dual-rail navigation system based on org hierarchy and security permissions:

Information hierarchy

Left navigation panel - Org layer

External controls, organization structure, and high-level platform features. Controlled by highest-level permissions.

Right contextual panel - App layer

In-app controls specific to the active chat or app. Features are surfaced dynamically based on the user's role and the app state.
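The dual-rail idea can be sketched in a few lines: each feature belongs to one rail, requires a minimum role, and may be limited to certain app states. This is only an illustration under stated assumptions. The role names, the feature-to-rail assignments, and the app states "editing" and "chatting" are hypothetical and are not taken from the shipped product.

```python
from dataclasses import dataclass
from enum import IntEnum


class Role(IntEnum):
    """Hypothetical permission levels, ordered from least to most privileged."""
    MEMBER = 1
    ENGINEER = 2
    ADMIN = 3


@dataclass(frozen=True)
class Feature:
    name: str
    rail: str                            # "org" (left panel) or "app" (right panel)
    min_role: Role                       # lowest role allowed to see the feature
    app_states: frozenset = frozenset()  # empty set means "shown in every app state"


# Illustrative feature list; the rail and role assignments are assumptions.
FEATURES = [
    Feature("Organization structure", "org", Role.ADMIN),
    Feature("Members", "org", Role.ADMIN),
    Feature("Collections", "app", Role.MEMBER),
    Feature("Agent configuration", "app", Role.ENGINEER, frozenset({"editing"})),
]


def visible_features(rail, role, app_state):
    """Return the feature names a given role sees on one rail in one app state."""
    return [
        f.name
        for f in FEATURES
        if f.rail == rail
        and role >= f.min_role
        and (not f.app_states or app_state in f.app_states)
    ]
```

With this shape, the left rail stays empty for a regular member, while the right rail changes as the same user moves between app states.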

Security permissions

How this IA benefited the UI

This architecture enabled organizational theming: org colors applied globally, while department-specific themes switch dynamically per chat. This way we ensured strong brand continuity and a cohesive multi-org UI experience.

We roughly outlined an initial information architecture in a low-fidelity mockup and moved on to building the wireframes.

Low-fidelity mockup based on IA

WIREFRAMING

Thanks to our Rustic UI framework, we were able to iterate on wireframing very quickly and with minimal friction.

My role

Apart from co-creating the overall UX flow, I owned the end-to-end creation of four critical features: members, canvas, collections, and multi-level threads.

Wireframes for critical features

1.3

Strategic shift

During the MVP build we observed changing market dynamics and learned that enabling AI creation alone wasn’t sufficient. AI had to be embedded into real professional workflows. And that required taking a new direction.

PHASE 2: App Store

Strategic repositioning & deep domain research

2.1

Expanded platform functionality

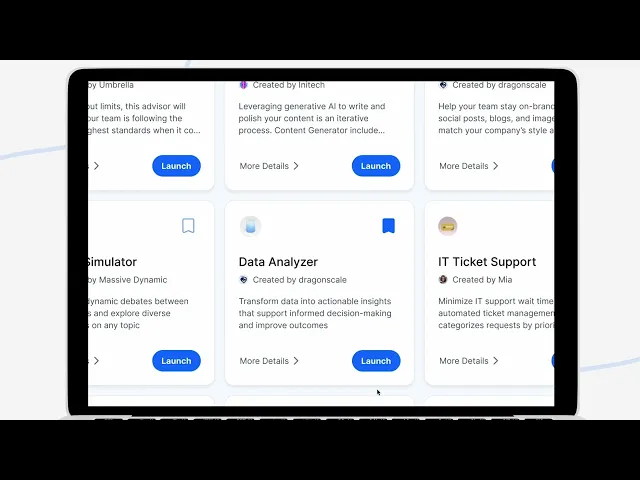

As part of the pivot, we introduced the concept of an app store, where users could create, share, and monetize their apps.

App Store and App Details

Watch this motion-graphic explainer that I created for the platform's marketing. It translates the platform's technical, "invisible" features into tangible visuals and makes the product's value proposition easier to understand.

Dragonscale platform - Marketing video

My role and product validation

I created and maintained the app store functionality on our Webflow site. The product attracted over 200 sign-ups from early-bird testers within the first 2 weeks.

2.2

Ongoing UX gap

We kept working through the fundamental design challenge: how do we make AI creation understandable to non-experts?

Initial MVP test failure

To quickly validate our simplification assumptions, we turned the 40+ parameters required to configure a multi-agent AI into a form-based UI. We then ran multiple user tests with two contrasting personas: developers and non-technical business users. Despite iterating on the design after each round, many fields still demanded technical knowledge - developers mostly completed the flow, while business users abandoned it 96% of the time.

MVP form-based UI

Even though we could have kept simplifying this experience with educational onboarding, I knew there had to be a better way, even if we had to rethink the UX from the ground up.

2.3

Creation of PRISM: problem discovery framework

After the form-based UI failed, we decided to research in depth how our target users think and what their domain-specific needs are.

I co-developed and later led the application of PRISM (Problem Recognition, Insight, and Solution Mapping) — a custom problem discovery framework designed to surface meaningful real-world needs across domains, rather than solutions.

We applied PRISM across 20+ professionals (HR, finance, law, real estate, etc.) to understand mental models, software stacks, and friction points.

2.4

Decision to pivot

All of the above, along with other market factors, led us to a strategic pivot toward a goal-native system: the first AI system that ends workflows entirely.

PHASE 3: Goal-Native Anticipatory AI platform

The Goal-Native Breakthrough

3.1

Rebuilding the experience

Most enterprises bolt AI onto old workflows, expecting modern results from legacy design. However, those workflows were built around human limits and assume determinism, while today's world is non-deterministic.

Shift of my role

During the PRISM research stage, I became the sole UX/UI designer at the company, so I led all design and research initiatives from then on.

RESEARCH

Given the novelty of the Goal Planning concept and its emergent nature, we didn't start with traditional generative research. Instead, we adopted a hypothesis-driven design approach. The MVP interface itself was designed as our initial validation tool.

We will be gathering quantitative and qualitative feedback from the first cohort of users to validate our core assumptions about the goal-native experience, which will then inform our 2nd iteration.

The new flow

Dragonscale became the first Goal-Native AI platform. Instead of bolting AI onto old processes and hoping for ROI, the user starts by defining the goal itself.

The AI becomes the Goal Coach, which helps the user define their objective properly. Then the AI crew simulates the best path and executes it autonomously, while automatically creating a multi-agent application in the background.

UX problem

How do we build an intuitive experience that translates a user's abstract objective into a production-ready, multi-agent AI app?

IDEATION

Functional requirements I had to work with:

App creation includes 3 stages: plan, simulate, run.

It's iterative: users will go back to refine goal spec and agent teams frequently.

Each mode displays extensive data users must monitor.

A minimum goal score is required before simulation for best results.
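These requirements can be read as a small state machine: three stages, free movement back to planning, and a score gate in front of simulation. The sketch below is one possible reading, not the product's implementation. The threshold value, the 0 to 1 score scale, and the rule that "run" is only reachable from "simulate" are assumptions made for illustration.

```python
from dataclasses import dataclass

STAGES = ("plan", "simulate", "run")
MIN_GOAL_SCORE = 0.8  # assumed threshold on an assumed 0-1 scale; the source gives no value


@dataclass
class GoalSession:
    goal_score: float = 0.0
    stage: str = "plan"

    def can_enter(self, target):
        """Check whether the session may move to the target stage."""
        if target not in STAGES:
            raise ValueError(f"unknown stage: {target}")
        if target == "plan":
            return True  # iteration: users can always go back and refine the goal
        if target == "simulate":
            return self.goal_score >= MIN_GOAL_SCORE  # minimum goal score gate
        # Assumption: "run" is started from the simulate step only.
        return self.stage == "simulate"

    def go_to(self, target):
        """Move to the target stage if allowed; return True on success."""
        if not self.can_enter(target):
            return False
        self.stage = target
        return True
```

A session with a low goal score stays in plan mode when asked to simulate, which is where the Goal Coach would keep asking clarifying questions.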

Keeping those in mind, I created 3 concepts to explore.

Concept 1: Unified, dynamic: all three modes in one place; easy iteration from anywhere; chat-first.

Concept 2: Plan + simulate together, run separate: less flexible iteration, but clearer split between testing and execution and easier version tracking; chat-first.

Testing initial ideas

I presented these three concepts to 5 internal business users. During individual interviews, I asked for their initial reaction and feedback on the core task flow around each concept.

Key observations

Users felt that separating modes (concepts 2 and 3) slowed them down. Having all three modes in one unified place made it easier to tweak and re-run fluidly, without feeling like they were “navigating the product” instead of working on the goal.

Dashboard as the primary workspace didn’t match the way most users think. Users naturally framed their work as a conversation, not as hopping between dashboards or apps. In concepts 2 and 3, they worried they’d lose important context.

Most users asked for the goal specification panel to be displayed at all times.

Design decision

Based on the feedback from users and stakeholders, I decided to build the interface on concept 1.

WIREFRAMING

As the next step I quickly put together some wireframes using our Rustic UI design system.

3.1.1

Plan mode

Interaction:

User: Starts a chat with the Goal Coach (a team of AI agents).

Agents: Ask deep clarifying questions while converting the conversation into a structured goal specification.

Plan mode

3.1.2

Simulation mode

Interaction:

User: Clicks on the “Simulate” button and lands in the simulation mode.

Agents: Begin identifying thousands of potential scenarios for simulation.

Functional specifications to be considered:

The system generates thousands of scenarios.

Each scenario has numerous sub-scenarios.

All scenarios run in parallel.

SUB-TOPIC RESEARCH

I treated simulation mode as a sub-problem requiring specialized research.

Insight

In the initial MVP experience developed for testing, all of the thousands of scenarios appeared as chat messages. As a result, users couldn't easily scan and analyze scenarios, so they couldn't evaluate theme coverage or spot scenario gaps.

IDEATION

Given this data, I started defining the UX.

To address the information overload, I came up with the concept of a 4-view scenarios panel: table, themes, timeline, and details. Each view makes a large volume of data easier to comprehend in a specific way.

With this design approach, the chat space is used only for exploration, explanation, reasoning, and negotiation, while the right sidebar is a structured space for seeing, scanning, and deciding (it can be manipulated through chat prompts, too).

Table (primary view)

“Show me everything, but sortable and filterable.” Best answers the question “What exactly do we have for X?”

Themes

“Show me the shape of the risk space.” A way to see patterns instead of just lists.

Timeline

“When do things hit us?” Aggregates scenarios along a timeline, showing users when a potential impact is likely to happen.

Details

Single scenario view. This view is where decisions on the scenario are made and recorded.
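The four views can be thought of as four projections of the same scenario list. The sketch below illustrates that idea only. The `Scenario` fields (theme, month, severity, covered) and the sample rows are hypothetical and made up for the example; the real data model is not described here.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    id: str
    theme: str
    month: int      # assumed unit: months from now until the expected impact
    severity: int   # assumed scale: 1 (low) to 5 (high)
    covered: bool   # whether the current plan already handles this scenario


# Made-up sample data, for illustration only.
SCENARIOS = [
    Scenario("s1", "supply", 2, 4, True),
    Scenario("s2", "supply", 5, 2, False),
    Scenario("s3", "staffing", 2, 5, False),
    Scenario("s4", "pricing", 9, 3, True),
]


def table_view(scenarios, theme=None, sort_by="severity", descending=True):
    """Table: everything, but filterable by theme and sortable by any field."""
    rows = [s for s in scenarios if theme is None or s.theme == theme]
    return sorted(rows, key=lambda s: getattr(s, sort_by), reverse=descending)


def themes_view(scenarios):
    """Themes: totals and uncovered counts per theme, to expose coverage gaps."""
    summary = defaultdict(lambda: {"total": 0, "uncovered": 0})
    for s in scenarios:
        summary[s.theme]["total"] += 1
        if not s.covered:
            summary[s.theme]["uncovered"] += 1
    return dict(summary)


def timeline_view(scenarios):
    """Timeline: number of scenarios per month, in chronological order."""
    return dict(sorted(Counter(s.month for s in scenarios).items()))


def details_view(scenarios, scenario_id):
    """Details: a single scenario, looked up by id."""
    return next(s for s in scenarios if s.id == scenario_id)
```

Because every view derives from the same list, a change made through chat or in the details view would show up in the other three views without extra synchronization.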

Simulation panel - entire view

Data visualizations updating in real time, giving users a quick view of which scenarios are already covered and where there are potential gaps.

Simulate mode

3.1.3

Run mode (Deploy & Iterate)

Interaction:

User: Once the desired simulation result is reached, clicks “Run” at the “Simulate” step and lands in “Run” mode.

Agents: The app is instantiated and begins executing the real-world goal.

Run mode

Final MVP designs

Launch a pre-made multi-agent AI app or create your own

Simulate your goals with GoalOS and have AI build itself for you

Use your own unique multi-agent system

Impact and conclusion

As of January 2026, we haven’t onboarded external production users yet, so there are no retention or revenue metrics to share.

Current signals come from internal dogfooding, investor/demo sessions, and waitlist signup behavior — validating clarity, demand, and feasibility.

The breakthrough came from redesigning the interaction around what users actually optimize for: goals.

By making goal definition the entry point, using conversation as the intent-capture layer, and pairing it with persistent structured panels for verification, we created an experience where AI orchestration becomes an implementation detail rather than the user's job.

The result is an interaction model that collapses time-to-value and meaningfully expands self-serve capability — moving multi-agent AI from an expert-led project into an outcome-led workflow that teams can adopt in minutes.